Clinical Decision Making


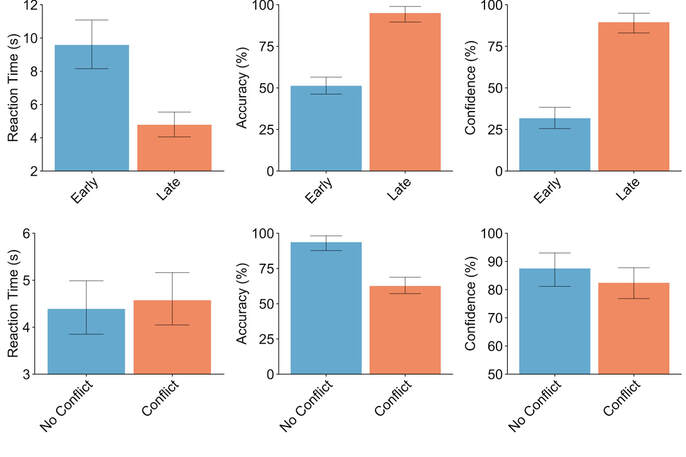

As mentioned in the theoretical research section, one of my research focuses is the dual-process theory of decision-making. This theory lends itself well to the world of clinical decision-making. Every day, clinicians make erroneous decisions that lead to misdiagnoses, and some researchers have attributed a large portion of these errors to a failure to overcome existing biases when assessing unusual cases. In dual-process terms, this is a failure to engage System 2 (slow, analytical thinking) when System 1 (fast, intuitive thinking) is insufficient for success. My honours thesis aimed to capture this process using portable EEG techniques, in an effort to directly measure decision-making strategies in clinicians. On the right, you can see preliminary results from this study. As the behavioural results show, participants formed biases about disease criteria while learning to diagnose patients. However, when faced with rare cases whose values differed from the ones they had learned, participants could not engage System 2 thinking to reach a correct diagnosis. Despite a decrease in accuracy, participants' reaction times and confidence in their decisions were relatively unchanged. This indicates a failure to overcome biases, even when participants were aware of the inaccuracy those biases could cause. The results are further supported by frequency data, which showed no significant differences in theta or beta activity between rare and typical cases. This is consistent with participants who incorrectly diagnosed rare cases being unable to switch to analytical thinking. Overall, our results highlight the need for intervention in clinical decision-making, especially regarding the prevalence of biases in diagnosis.
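
A theta and beta comparison between conditions like the one described above could be sketched roughly as follows. This is a minimal illustration, not the analysis pipeline used in the thesis. It assumes single-channel epochs already cut around each case presentation, a 256 Hz sampling rate, and conventional band edges (theta 4-8 Hz, beta 13-30 Hz). The function names are hypothetical.

```python
import numpy as np

# Assumed conventional band edges in Hz; exact cut-offs vary between labs.
BANDS = {"theta": (4.0, 8.0), "beta": (13.0, 30.0)}


def band_power(epoch, fs, low, high):
    """Mean spectral power of one single-channel epoch in [low, high) Hz.

    Uses a Hann-windowed periodogram. Absolute scaling is omitted because
    only relative comparisons between conditions are made here.
    """
    x = np.asarray(epoch, dtype=float)
    x = x - x.mean()                                  # remove the DC offset
    spectrum = np.fft.rfft(x * np.hanning(len(x)))    # windowed one-sided FFT
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)       # frequency of each FFT bin
    mask = (freqs >= low) & (freqs < high)
    return float(np.mean(np.abs(spectrum[mask]) ** 2))


def condition_band_means(epochs, labels, fs):
    """Average theta and beta power per condition label (e.g. 'typical', 'rare')."""
    means = {}
    for condition in sorted(set(labels)):
        trials = [e for e, lab in zip(epochs, labels) if lab == condition]
        means[condition] = {
            band: float(np.mean([band_power(t, fs, lo, hi) for t in trials]))
            for band, (lo, hi) in BANDS.items()
        }
    return means


if __name__ == "__main__":
    fs = 256                                 # assumed sampling rate in Hz
    t = np.arange(2 * fs) / fs               # one 2-second epoch
    epochs = [np.sin(2 * np.pi * 6 * t), np.sin(2 * np.pi * 20 * t)]
    print(condition_band_means(epochs, ["typical", "rare"], fs))
```

In a real comparison, each condition would contain many trials per participant. The per-participant means would then go into a statistical test, which this sketch leaves out.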

Some recommended readings related to my work: Croskerry (2009) - A Universal Model of Diagnostic Reasoning. Norman et al. (2017) - The Causes of Errors in Clinical Reasoning: Cognitive Biases, Knowledge Deficits, and Dual Process Thinking.

Behavioural data showing reaction time, accuracy, and subjective confidence ratings for the learning phase (top) and conflict phase (bottom). Participants learned to diagnose typical cases (top row). However, they adhered to their biases when diagnosing rare, or conflicting, cases and were unable to maintain accuracy (bottom row).


Cognitive Fatigue


Cognitive fatigue and "burnout" are becoming increasingly prominent parts of our daily lives. How many people don't even know how mentally tired they are? How many people work dangerous jobs while cognitively fatigued? These are important questions that we hope to answer. Our primary approach uses the MUSE portable EEG headband, which allows us to assess different aspects of cognition outside the lab. In the past year, we have made major progress in adapting this approach to fatigue assessment. Specifically, I have conducted field testing with working emergency room doctors and nurses, athletes, and members of the general population going about their day. From these data, we have developed specific methods for objectively quantifying cognitive fatigue from EEG signals. This work has been submitted to Frontiers in Neuroscience. I have also presented some of the software for these techniques at various technology showcases; for more information on these presentations, click here.

Some recommended readings related to my work: Walker (2008) - Cognitive consequences of sleep and sleep loss. Käthner et al. (2014) - Effects of mental workload and fatigue on the P300, alpha and theta band power during operation of an ERP (P300) brain–computer interface.

Research Methods-Portable EEG


As part of my 2019 NSERC USRA, I tested the research viability of various portable research tools. This area of my research speaks to one of my career goals as a scientist: to move decades of theoretical, lab-based research practices into the real world. Portable EEG tools like the MUSE EEG headband, the OpenBCI Ganglion EEG board, and the Cognionics Quick-8r headset make this knowledge translation of neuroscience research possible. For example, what happens when astronauts on Mars need to measure their cognitive fatigue before embarking on dangerous missions? How can we use neuroscience to help sports teams improve the performance of their players? My work involves testing how well these tools can answer such questions, and then building the best protocols for doing so. As part of my 2020 NSERC USRA, I plan to develop a better method for rejecting ocular artifacts (contamination from eye blinks and movements) in portable EEG devices, improving the data quality of field research. Overall, I am firmly dedicated to showing the world that neuroscience is more than researchers in a lab, and that what we do can improve the lives of many different populations.

These projects, especially the OpenBCI work, also taught me that applied work comes back around to inform theoretical work. Applied cases drive the need for theoretical work, which in turn feeds into more applied uses. With the OpenBCI system, my goal was initially to find a strong tool for portable EEG recording. However, I also began to appreciate that this same system may one day be well suited to theoretical experiments. In summary, I now understand that the relationship between theoretical and applied work is a two-way street.
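
For context on the ocular artifact work mentioned above, a common simple baseline is to reject any epoch whose peak-to-peak amplitude exceeds a fixed threshold, because blinks produce large deflections. The sketch below illustrates only that baseline, not the improved method planned for the 2020 project. The 100 µV threshold, the array layout, and the function name `reject_by_peak_to_peak` are all assumptions.

```python
import numpy as np


def reject_by_peak_to_peak(epochs, threshold_uv=100.0):
    """Drop epochs whose peak-to-peak amplitude exceeds a threshold on any channel.

    epochs: array-like of shape (n_epochs, n_channels, n_samples), in microvolts.
    threshold_uv: assumed default for illustration; labs tune this per device.
    Returns (kept epochs, indices of rejected epochs).
    """
    data = np.asarray(epochs, dtype=float)
    ptp = data.max(axis=-1) - data.min(axis=-1)   # shape (n_epochs, n_channels)
    bad = (ptp > threshold_uv).any(axis=1)        # True if any channel is over threshold
    return data[~bad], np.flatnonzero(bad)
```

A fixed threshold discards whole epochs. With short field recordings, that loss of data is one motivation for more selective rejection methods.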

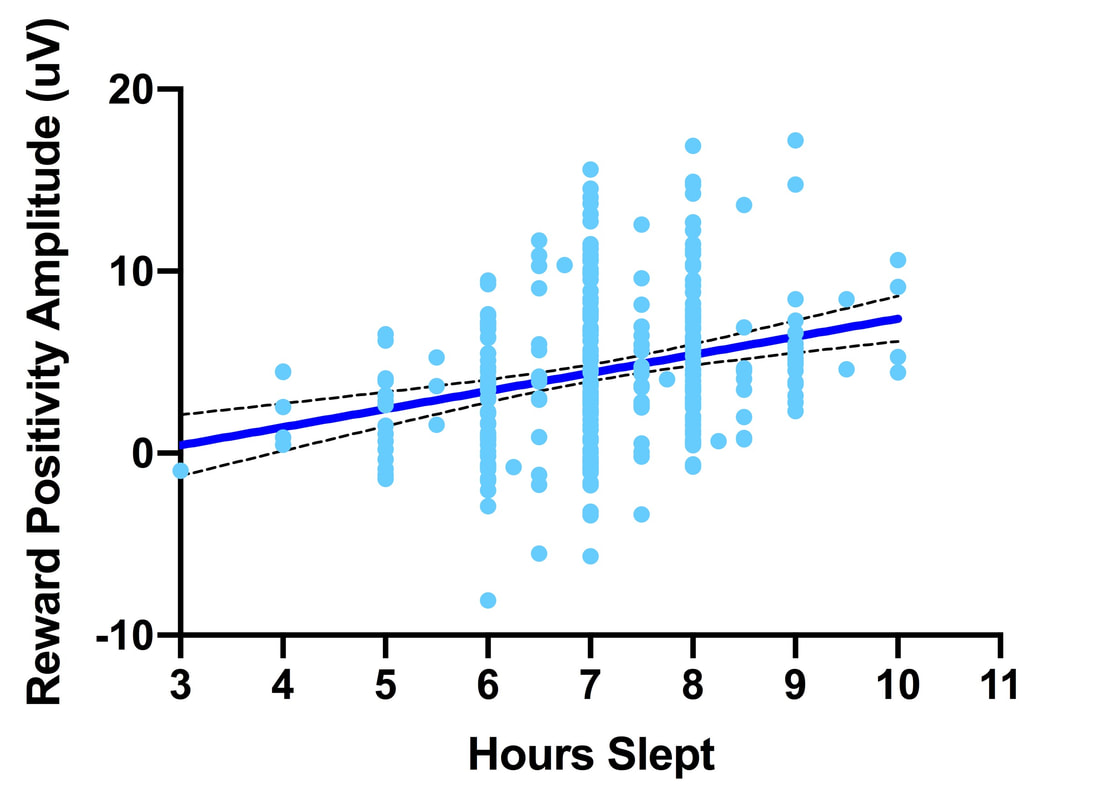

Some readings related to my work: Qiu, Casey, & Diamond (2019) - Assessing Feedback Response With a Wearable Electroencephalography System. Krigolson et al. (2017) - Choosing MUSE: Validation of a Low-Cost, Portable EEG System for ERP Research.

Here is the interface for the OpenBCI system, showing some sample data I recorded. The key point is that this system can record research-quality raw EEG signals (top right) and instantly convert them into frequency bands (top left). When we assess EEG data for in-lab studies, the main things we look at are event-related potentials (ERPs) from raw EEG and time-frequency (wavelet) analyses of frequency bands. The OpenBCI system can therefore support both while being extremely portable.
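
To illustrate the ERP side of this, the standard approach is to cut the raw signal into epochs around each event, baseline-correct each epoch, and average them. The sketch below is a minimal illustration, not the OpenBCI software itself. It assumes a single-channel recording held as a NumPy array, event onsets given as sample indices, and a window from -200 ms to 600 ms. `average_erp` and its parameters are hypothetical names.

```python
import numpy as np


def average_erp(signal, event_samples, fs, tmin=-0.2, tmax=0.6):
    """Epoch a single-channel recording around events and average into an ERP.

    Each epoch is baseline-corrected by subtracting its mean pre-event voltage.
    Returns (times in seconds relative to event onset, averaged waveform).
    """
    signal = np.asarray(signal, dtype=float)
    start_off = int(round(tmin * fs))   # negative: samples before onset
    stop_off = int(round(tmax * fs))
    n_pre = -start_off
    if n_pre <= 0:
        raise ValueError("tmin must be negative to leave a pre-event baseline")
    epochs = []
    for onset in event_samples:
        start, stop = onset + start_off, onset + stop_off
        if start < 0 or stop > len(signal):
            continue                    # skip events too close to the recording edges
        epoch = signal[start:stop]
        epochs.append(epoch - epoch[:n_pre].mean())   # baseline correction
    if not epochs:
        raise ValueError("no complete epochs could be extracted")
    times = np.arange(start_off, stop_off) / fs
    return times, np.mean(epochs, axis=0)
```

Averaging works because activity that is time-locked to the event adds up across trials, while unrelated background EEG tends to cancel out.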


Tutorial Videos

Another of my goals as a scientist is a commitment to open science. As such, I have begun working to clarify the methods we use for data collection and analysis. Linked below are various tutorial videos I made for conducting MUSE research, both as a resource for new students in our lab and to share our methods with the public. Check them out! Note that I do not take credit for any of the software or hardware showcased in the videos; I simply show how to use them. Finally, I also present results from an analysis of over 1000 MUSE participants to showcase different analysis parameters. Analyzing this dataset was painful, but it taught me about data structure and why certain industry standards for analysis exist. I understand how daunting it can be to jump into a new research project with novel technology. I hope these videos can help you get started!